Gamble Expectation and the Ergodicity Conundrum

A few years ago I read a paper by Ole Peters [1] that, according to commentators on a popular blog, made some questionable claims. A recent tweet by Ole Peters ignited a long thread about the soundness of the following statement by Chernoff and Moses in Elementary Decision Theory (1959), p. 98:

In his paper, Ole Peters writes:

In my opinion, Chernoff and Moses (CM) are not making any statement about ergodicity. Any such assumption may lead to a straw man argument. But before we start it is necessary to state some definitions:

The simplest definition I can offer for the expectation of a random variable x is that it is the average value of x in the limit of a sufficiently large number of samples. This is also called the mean of the random variable x and it is denoted by E{x}.

Note that the average is based on numeric values the random variable x takes. This point is important; make a note of it because it will be used later.

Assume that we repeat an experiment n times and we observe the outcomes z1, z2,…zn. For each outcome, the random variable x assumes values x(z1), x(z2),…x(zn). If n is sufficiently large, then

E{x} ≈ (x(z1) + x(z2) + … + x(zn))/n (1)

which is also called the frequency interpretation of expectation [2]. In general, the expectation of a discrete random variable x taking values xn with probability pn is given by the following equation:

E{x} = Σn pn xn (2)

Example

Consider the following gamble: A fair coin is tossed. You gain $0.50 if heads and lose $0.40 if tails. Calculate the expectation.

Note that according to our definitions above:

z1 = heads, z2 = tails

p{z1} = p{z2} = 0.5

x(z1) = $0.50, x(z2) = -$0.40

According to Eq. 2, the expectation is then:

E{x} = 0.5 × $0.50 − 0.5 × $0.40 = $0.05

Therefore, this gamble wins $0.05 per toss on average, assuming it is repeated many times (a sufficient sample), as per CM above.
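The arithmetic above can be checked with a minimal Python sketch of the discrete expectation formula. The helper name `expectation` and the list `coin_gamble` are illustrative choices, not taken from any source; exact fractions are used only to avoid floating-point rounding.

```python
from fractions import Fraction

def expectation(outcomes):
    """Sum of p * x over (probability, value) pairs of a discrete random variable."""
    return sum(p * x for p, x in outcomes)

# Fair coin: gain $0.50 on heads, lose $0.40 on tails (values in dollars).
coin_gamble = [
    (Fraction(1, 2), Fraction(50, 100)),   # heads
    (Fraction(1, 2), Fraction(-40, 100)),  # tails
]

mean_gain = expectation(coin_gamble)
print(float(mean_gain))  # 0.05
```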

One issue with this and related gambles is that it takes a sufficient sample for the average in Eq. 1 to converge to the expectation value in Eq. 2. Given capital constraints, ruin may occur before the average converges to its theoretical value. There is a whole science that attempts to measure risk of ruin and minimize it while maximizing wealth growth. I will only mention the Kelly criterion, which is due to John Kelly and was popularized by Ed Thorp.
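To illustrate how starting capital affects the chance of ruin in the additive gamble, here is a rough Monte Carlo sketch. Everything specific in it is an assumption made for illustration: the function name `ruin_frequency`, the ruin rule (capital falls below the $0.40 needed to cover the next loss), the 500-toss horizon, and the two starting amounts. Amounts are tracked in integer cents to avoid rounding issues.

```python
import random

def ruin_frequency(start_cents, n_tosses, n_paths, seed=0):
    """Fraction of simulated paths that hit ruin within n_tosses.

    Assumed ruin rule: capital drops below 40 cents, so the
    next possible loss could no longer be covered.
    """
    rng = random.Random(seed)
    ruined = 0
    for _ in range(n_paths):
        capital = start_cents
        for _ in range(n_tosses):
            capital += 50 if rng.random() < 0.5 else -40
            if capital < 40:
                ruined += 1
                break
    return ruined / n_paths

small = ruin_frequency(100, 500, 2000, seed=1)    # start with $1
large = ruin_frequency(1000, 500, 2000, seed=1)   # start with $10
print(small, large)
```

Under these assumptions the small bankroll should be ruined far more often than the large one, even though the expectation per toss is the same in both cases.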

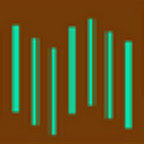

Below is an example of one random path taken before the average converges to the expectation in the above gamble. The Y-axis is the average of the random variable values and the X-axis shows the number of repetitions (tosses).

It may be seen that for this particular random sequence of outcomes, the average first drops near -$0.20 before slowly converging to $0.05 after about 400 tosses. Note that convergence to the expectation of additive repetition gambles is usually slow and requires a large sample.
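A path like the one described can be reproduced with a short simulation. This is only a sketch: the function name `running_average` and the seed are arbitrary, and a different seed gives a different early path, so the dip and the number of tosses needed to settle near $0.05 will vary.

```python
import random

def running_average(n_tosses, seed=0):
    """Running average of gains (+0.50 heads, -0.40 tails) for a fair coin."""
    rng = random.Random(seed)
    total = 0.0
    averages = []
    for i in range(1, n_tosses + 1):
        total += 0.50 if rng.random() < 0.5 else -0.40
        averages.append(total / i)
    return averages

path = running_average(100_000, seed=1)
# Average after 10, 400, and 100,000 tosses.
print(path[9], path[399], path[-1])
```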

P=P

In essence, the statement by CM challenged by Ole Peters is a tautology: if the expectation of a gamble is favorable (positive and sufficiently large) and you have the choice of repeating the gamble many times (sufficient sample), eventually wealth increases (assuming no ruin in the meantime). But to start with, positive expectation is a statement about a positive value of a random variable on average over a sufficient sample. Therefore, wealth necessarily increases, again assuming there is enough starting wealth to absorb interim losses before the averages converge to the expectation. It should be clear that CM’s statement is the definition of expectation and nothing more than that. However, Ole Peters attempts to challenge it by invoking ergodicity. Let us see how he does so and whether the rebuttal is valid.

Ole Peters uses an example of a gamble that he asserts is an exception to the CM statement and therefore violates its universality. This example has since been restated in numerous blogs and articles, and there is a perception that it indeed violates the statement by CM. This gamble was also included in the Twitter thread mentioned before. Ole Peters writes:

This is the gamble proposed by Ole Peters that supposedly violates CM:

You start with $1 in a fair coin game. Heads multiplies wealth by 1.5 (you win 50% of your wealth) and tails multiplies wealth by 0.6 (you lose 40% of your wealth).

Ole Peters asserts that in this gamble the ensemble average, which he equates to the expectation defined by CM, is not equal to the time average and therefore ergodicity is violated. In fact, Ole Peters asserts that he has broken a tautology in probability theory. This would be a major breakthrough, but actually it is not. The whole argument is based on conflating the mean arithmetic return factor with the expectation of the gamble. What do I mean by that?

0.5 × 1.5 + 0.5 × 0.6 = 1.05 (3)

is not the expectation, or ensemble average, as Ole Peters defines it, but the mean arithmetic return factor (1+r). Below is what I wrote after defining expectation that I asked the reader to make a note of:

Note that the average is based on numeric values the random variable x takes.

However, 1.5 and 0.6, the arithmetic return factors, are not the numeric values the random variable takes but multipliers of wealth. The numeric values depend on the wealth at steps n and n-1 of the repetition. Therefore, Eq. (3) is not the expectation but the mean arithmetic return factor.
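A small sketch makes the distinction concrete: the same multiplier produces a different dollar change depending on the wealth it is applied to. The function name `wealth_changes` and the particular sequence of two heads followed by two tails are illustrative assumptions.

```python
from fractions import Fraction

def wealth_changes(factors, start=Fraction(1)):
    """Dollar change W(n) - W(n-1) at each step for a sequence of multipliers."""
    wealth = start
    changes = []
    for factor in factors:
        new_wealth = wealth * factor
        changes.append(new_wealth - wealth)
        wealth = new_wealth
    return changes

heads, tails = Fraction(3, 2), Fraction(3, 5)  # multipliers 1.5 and 0.6
changes = wealth_changes([heads, heads, tails, tails])
print([float(c) for c in changes])  # [0.5, 0.75, -0.9, -0.54]
```

The two heads yield gains of $0.50 and $0.75, and the two tails yield losses of $0.90 and $0.54, although the multipliers themselves never change.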

Furthermore, we all know that the geometric return G is less than or equal to the mean arithmetic return A. The first-order approximation is as follows:

G ≈ A − V/2 (4)

For gambles such as the one in Ole Peters’ example, with a 50% gain for heads and a 40% loss for tails, the variance V is relatively large. Although the mean arithmetic return is positive (5%), the geometric return factor is less than 1 (√(1.5 × 0.6) ≈ 0.949), resulting in decaying equity over time. This is nothing new, and it has nothing to do with ergodicity or any other fancy terminology.

The expectation for the gamble in Ole Peters’ example that supposedly rebuts CM is actually 0, and there is no contradiction. Below is a graph of a random path that shows how the average change in wealth from step n-1 to step n, which is the proper random variable to use in this case, evolves in time. This average converges to an expectation of 0 after about 50 steps.
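The numbers behind Eq. 4 for this gamble can be worked out in a few lines. The sketch below assumes V is the variance of the per-toss return and computes the exact geometric return from the average of the log multipliers; the variable names follow the symbols in the text.

```python
import math

factors = [1.5, 0.6]   # wealth multipliers for heads and tails
probs = [0.5, 0.5]     # fair coin

returns = [f - 1.0 for f in factors]                        # +0.50 and -0.40
A = sum(p * r for p, r in zip(probs, returns))              # mean arithmetic return
V = sum(p * (r - A) ** 2 for p, r in zip(probs, returns))   # variance of returns

G_approx = A - V / 2   # first-order approximation from Eq. 4
G_exact = math.exp(sum(p * math.log(f) for p, f in zip(probs, factors))) - 1

print(f"A = {A:.4f}, V = {V:.4f}")
print(f"G (approx) = {G_approx:.5f}, G (exact) = {G_exact:.5f}")
```

Here A is 0.05 and V is 0.2025, so the approximation gives roughly -0.0513, close to the exact value √0.9 − 1: a positive arithmetic return alongside a negative geometric return.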

Below is a random wealth path

Wealth goes to zero over time because a loss of 40% needs a gain of 66.67% for recovery and 50% is not nearly enough. At the limit of sufficient samples there are as many 40% losses as 50% gains and this results in decaying wealth. Accordingly, the expectation is zero.
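The decay can be reproduced with a minimal simulation of one wealth path. This is a sketch under stated assumptions: the function name `simulate_wealth`, the seed, and the 10,000-step horizon are arbitrary. The per-step average change along the path is (W(n) − W(0))/n, which shrinks toward 0 as wealth decays and n grows.

```python
import random

def simulate_wealth(n_steps, seed=0, start=1.0):
    """One random wealth path: multiply by 1.5 on heads, 0.6 on tails."""
    rng = random.Random(seed)
    wealth = [start]
    for _ in range(n_steps):
        factor = 1.5 if rng.random() < 0.5 else 0.6
        wealth.append(wealth[-1] * factor)
    return wealth

wealth = simulate_wealth(10_000, seed=1)
n = len(wealth) - 1

# Average of the step-to-step wealth changes along this single path.
avg_change = (wealth[-1] - wealth[0]) / n
print(wealth[-1], avg_change)
```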

Chernoff and Moses survived

I would not expect anything different. If they had made such a fundamental mistake as Ole Peters asserts, the whole foundation of probability theory would be at stake. But CM just stated a tautology of the form P = P. Maybe there is a small probability they are wrong. Maybe there is another example showing they are wrong, one that I am not aware of or that will be presented in the future. We should always leave the door open to major discoveries.

Although the concept of ergodicity is important, I am not sure it is relevant to the CM case. The example by Ole Peters on which the rebuttal of CM was based used the mean arithmetic return factor in place of the expectation. In fact, the expectation of that gamble is zero. As a result, that gamble cannot be used to argue that CM is wrong due to non-ergodicity.

Also see Round Two for simulations and additional proofs.

References

[1] O. Peters and M. Gell-Mann. Evaluating gambles using dynamics. Chaos, 26:023103, February 2016. Also see: Evaluating gambles using dynamics

[2] A. Papoulis. Probability, Random Variables, and Stochastic Processes. McGraw-Hill, 1965, p. 138.

If you have any questions or comments, I am happy to connect on Twitter: @mikeharrisNY

About the author: Michael Harris is a trader and best-selling author. He is also the developer of the first commercial software for identifying parameter-less patterns in price action 17 years ago. In the last seven years he has worked on the development of DLPAL, a software program that can be used to identify short-term anomalies in market data for use with fixed and machine learning models.